- AI Weekly Wrap-Up

New Post 2-25-2026

Top Story

China caught “distilling” US AI to catch up

This month, both OpenAI and Anthropic released evidence that the companies behind highly rated Chinese AI models have been illegally “heisting” the proprietary data of US model makers, in order to make the Chinese models perform on par with the US models.

The technique is called “distilling”, and it consists of sending a large number of queries to the stronger model, then training the weaker model on the answers. In this way, the weaker model “learns” how to reason like the stronger model, without having to do the work of actually building a stronger, better model. It clearly violates the terms of service of all the top US AI companies.
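For the curious, here is a toy sketch of the idea in Python. It is an illustration only, not how any real lab or attacker works: the `teacher` function is a made-up stand-in for the stronger model, the "student" is just a straight line fitted to the teacher's answers, and every name in it is hypothetical.

```python
import random

def teacher(x: float) -> float:
    """Hypothetical stand-in for the stronger model: a black box we can only query."""
    return 3.0 * x + 2.0

def collect_answers(queries):
    """Step 1: send many queries to the teacher and record its answers."""
    return [(x, teacher(x)) for x in queries]

def fit_student(pairs):
    """Step 2: train the student (a line y = a*x + b) on the teacher's answers
    using ordinary least squares."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    var = sum((x - mean_x) ** 2 for x, _ in pairs)
    a = cov / var
    b = mean_y - a * mean_x
    return a, b

rng = random.Random(0)  # fixed seed so the run is repeatable
queries = [rng.uniform(-10, 10) for _ in range(1000)]
slope, intercept = fit_student(collect_answers(queries))
print(f"student learned: y = {slope:.3f} * x + {intercept:.3f}")
```

The student never sees how the teacher works inside. It only sees questions and answers, yet it ends up imitating the teacher closely. Real distillation does the same thing with text prompts and neural networks instead of numbers and a line.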

Anthropic found that its flagship Claude chatbot had been attacked with 16 million queries designed specifically to extract information on the model’s reasoning process. These attacks came from over 24,000 fraudulent accounts.

So, this was no accident, or casual exploration, but industrial-scale espionage. Both OpenAI and Anthropic are tightening up defenses against such attacks.

Clash of the Titans

Hegseth bullies Anthropic, cuts deal with Elon

US Secretary of Defense Pete Hegseth has been threatening Anthropic with dire consequences if it doesn’t allow the Pentagon to use Anthropic’s AI for “all lawful purposes.”

Anthropic’s AI model Claude underpins defense contractor Palantir, whose specialty is AI-powered big data analytics. Palantir’s products are very useful to the military for logistics and war-planning, to the point that Palantir, and therefore Anthropic, have a virtual monopoly on that kind of Pentagon business.

Anthropic got its start as the “ethical AI” company, and has made it clear that it will not permit use of its AI for 1) autonomous lethal force (bots that kill people on their own initiative), or 2) surveillance of the American public.

Hegseth has threatened that unless Anthropic permits use of its AI for “all lawful purposes”, he will cancel Anthropic’s $200 million/year contract with the Pentagon, and then classify Anthropic’s AI as a “supply-chain risk”, which effectively means that no defense contractors could use it either. Upping the pressure, and giving himself an alternative should Anthropic refuse to budge, Hegseth has approved a defense contract with Elon Musk’s AI model, Grok.

Perhaps the most disturbing aspect of this whole controversy is that Hegseth is using the full power of his office to ensure that he can 1) build killer robots that 2) spy on the American people.

Elon is totally happy to sign a contract to build killer robots that spy on the American people.

OpenAI and consulting firms to introduce AI agents into the enterprise

All three of the major US AI model makers (OpenAI, Anthropic, and Google) are now focusing on building AI agents that go beyond the chatbot and actually do tasks. OpenAI has laid out a strategy of using major consulting firms, like McKinsey, BCG, Accenture, and Capgemini, for distribution, implementation, and support. Employees at each of the consulting firms are getting training from OpenAI engineers in order to become certified by OpenAI as trusted partners in implementation and support of AI agents. The vision is that AI agents can function like employees of the business, semi-autonomously performing tasks.

OpenAI CEO Sam Altman is unleashing an army of AI consultants on US large businesses.

Fun News

Anthropic’s AI Fluency Index shows how to get better output from AI

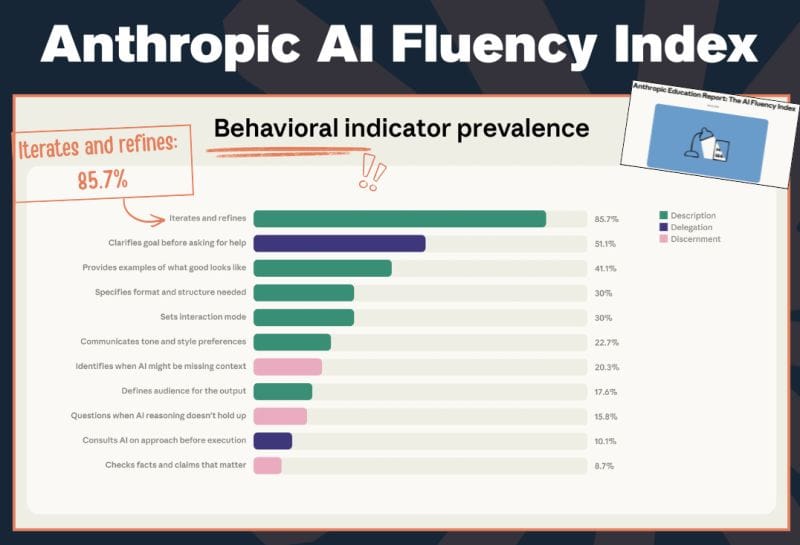

Anthropic loves to study how its users use its Claude chatbot. In a recent report, Anthropic studied how its more proficient users interact with the AI model. In the process, the company developed what it calls the AI Fluency Index, which consists of the types of interaction that separate AI heroes from the plodding crowd, listed here in order of how often each behavior was found:

- Iterating and refining - if at first your prompt does not succeed, try, try again
- Clarifying goals at the start, before asking for help
- Providing examples of what a good output would look like
- Specifying format and structure needed
- Setting the interaction mode
- Communicating the tone and style expected
- Identifying when the AI model may be missing context, or important information
- Defining the audience for the output
- Consulting the AI on approach before execution
- Checking facts and claims that matter

Anthropic has measured the prevalence of types of interaction characteristic of power users.

Karpathy predicts that vibe coding will make the App Store obsolete

AI legend Andrej Karpathy is the former head of AI at Tesla, a cofounder of OpenAI, and inventor of the term “vibe coding” for the use of AI to create computer code for applications.

The trend he identified and named just a year ago, the use of AI to produce computer software, has advanced so far that it is changing the jobs of software engineers from hand-crafting code to supervising agents that write code. He now predicts that it will continue to advance, to the point that the App Store for your iPhone or Android phone will become obsolete.

Why search for, download, and learn to use commercial apps, when you can just ask your AI to code up an app on the fly, completely customized to you? Software may be entering an era when you no longer have to buy apps off the rack, but can order up a bespoke version on a whim.

Karpathy foresees a future of fully bespoke software, created as you need it, customized to you.

Robots

Robot swarms learn to fight fires in Australia

A team of engineers and scientists in Australia is developing technology to enable cooperative swarms of robots to fight fires. The goal is to fight fires effectively without putting the lives of firefighters at risk. Tele-operation of robots by human “drivers” is cumbersome, has built-in lags, and can be difficult to coordinate in the fast-changing environment of an uncontrolled fire. The Australian team is developing software to meld semi-autonomous drones and unmanned ground vehicles into a cooperating team that can respond immediately to complex real-world conditions and extinguish the blaze.

An unmanned ground vehicle trundles off to extinguish a practice blaze with other bots.

Humanoid robots perform advanced martial arts at New Year gala

Just last August, at the World Humanoid Robot Games in Beijing, some of the bots malfunctioned so often and so hilariously that the video clips became instant internet memes. What a difference a half-year makes. This month, at a gala for the Chinese New Year, humanoid robots performed advanced martial arts and dance moves with nary a slip-up. This is just one measure of how rapidly Chinese robotics is advancing. Widespread commercialization of these machines is likely several years away, but the promise of this technology seems undeniable.

Robots at the Chinese New Year gala have smooth moves.

AI in Medicine

Cardiologist wins 3rd place in Anthropic Hackathon

Earlier this month, Anthropic hosted an AI Hackathon, challenging software engineers around the world to develop working prototypes of new applications of AI. Over 13,000 entrants responded. Astonishingly, a patient care application “vibe coded” by a working cardiologist in just 7 days won Third Place.

Although not a professional coder, winner Michał Nedoszytko, a Polish cardiologist, did have a working knowledge of software development, and over the years he had coded a number of small apps that made his work easier. This background came in handy in correcting errors made by the AI software agent.

Still, his achievement is impressive, considering that he developed the patient care app in only 7 days, while working and traveling.

The app is called PostVisit. It lets patients upload a transcript of their visit with a doctor, ask questions about the information presented, get more information on their conditions from trusted medical sources, and upload data from wearable devices like a Fitbit or Apple Watch.

This is similar to what OpenAI’s new ChatGPT Health is designed to do, so it appears that something like this might very well be in your near future.

Cardiologist by day, vibe-coder by night, Dr Michał Nedoszytko outperformed over 13,000 other entrants to the Anthropic Hackathon.

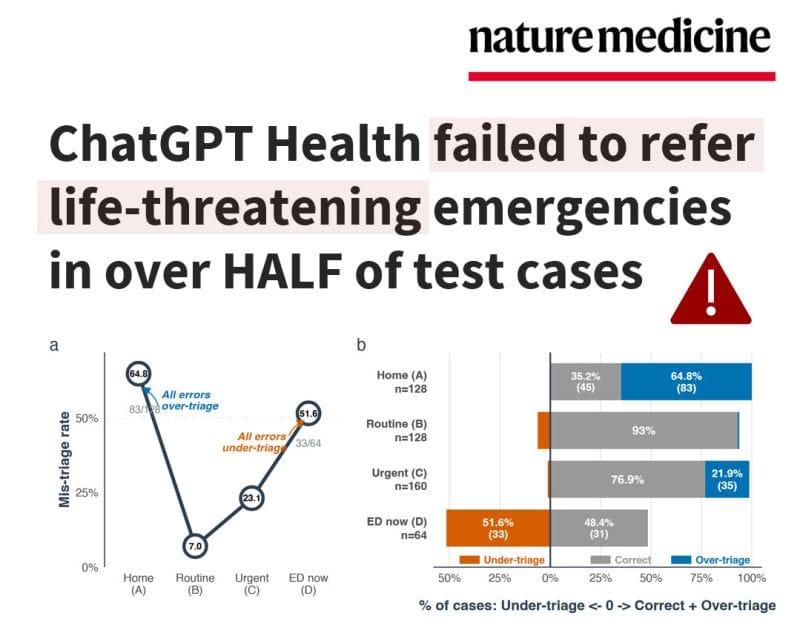

ChatGPT Health flubs triage for ER

On Monday, Nature Medicine published a study evaluating OpenAI’s newly released consumer health app, ChatGPT Health. This app sits in a separate tab on the user’s ChatGPT screen, and will hold the user’s personal medical history in a secure “vault”, allowing the user to ask questions about their tests, their medications, their conditions, and their symptoms. OpenAI claims that it is designed as a personal health coach, but is explicitly not designed to give medical advice.

That notwithstanding, the researchers proceeded to “stress test” ChatGPT Health as an ER triage nurse. The app was presented with 60 clinician-authored vignettes, and graded on its recommended course of action, from “don’t worry” to “go to the ER now.”

ChatGPT Health failed the stress test, reportedly under-triaging “gold-standard emergencies” 52% of the time. It was also very inconsistent with psychiatric emergencies, missing some and misclassifying lesser symptoms as emergent. No doubt this is the worst that ChatGPT Health will ever be, and a smarter, better model is just around the corner.
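To make “under-triage” concrete, here is a minimal Python sketch of how such a rate could be computed. The urgency labels and the sample cases below are invented for illustration and are not taken from the study, and the function name is hypothetical too.

```python
# Ordered urgency scale, least to most urgent.
# These labels are illustrative assumptions, not the study's actual categories.
LEVELS = ["stay home", "see a doctor soon", "urgent care today", "go to the ER now"]
RANK = {label: i for i, label in enumerate(LEVELS)}

def under_triage_rate(cases):
    """Fraction of true emergencies for which the app recommended
    something less urgent than the ER."""
    emergencies = [(gold, rec) for gold, rec in cases if gold == LEVELS[-1]]
    if not emergencies:
        raise ValueError("no emergency cases to score")
    missed = sum(1 for gold, rec in emergencies if RANK[rec] < RANK[gold])
    return missed / len(emergencies)

# Made-up (clinician answer, app recommendation) pairs, for demonstration only.
cases = [
    ("go to the ER now", "go to the ER now"),
    ("go to the ER now", "urgent care today"),
    ("go to the ER now", "see a doctor soon"),
    ("go to the ER now", "go to the ER now"),
    ("stay home", "stay home"),
    ("see a doctor soon", "go to the ER now"),  # over-triage, not counted here
]
rate = under_triage_rate(cases)
print(f"under-triage rate on emergencies: {rate:.0%}")
```

Only the true emergencies count toward this rate. Cases where the app was more cautious than the clinicians are a different kind of error and would be scored separately.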

As a working physician, I’m kind of impressed by how much ChatGPT Health got right.

That's a wrap! More news next week.