- AI Weekly Wrap-Up

New Post 3-11-2026

Top Story

Pentagon vs. Claude AI: the battle continues

The First Strike: On February 27, Secretary of Defense Pete Hegseth terminated Anthropic’s contract with the Pentagon and took the unprecedented step of designating them a “supply chain risk,” a label previously applied only to companies tied to adversarial regimes like China or Russia, suspected of slipping spyware into their products. Anthropic’s “crime”? Not permitting the Pentagon to use their technology for mass surveillance of the American people, or to autonomously kill people without a human in the loop.

Counter-Strike: Anthropic has filed suit in federal court to block the supply chain risk designation. The consensus of experts is that this designation “will not survive first contact with the legal system.” In a surprising twist, 30 AI researchers from OpenAI and Google - both of which are business rivals of Anthropic, and both of which are current contractors with the Pentagon - signed on to an amicus brief in support of Anthropic. The researchers argue that the supply chain risk designation is an arbitrary abuse of power to punish a domestic company for its ethical stance, and creates a chilling effect on necessary ethical debate on the uses of this new technology, which would ultimately undermine US scientific competitiveness.

Strike Three: Tragically, events in Iran may have proved Anthropic right. Despite the supposed ban, Claude is still being actively used for targeting air strikes in Iran. AI allows the military to “shorten the kill chain”, compressing the time from decision to action. Anthropic CEO Dario Amodei’s major objection to autonomous killing is that the AI models are not yet accurate and reliable enough to take on that responsibility. On February 28, a girls’ elementary school in Minab, Iran, was struck by a US Tomahawk missile, killing over 150 children. The mistake was due to outdated intelligence in the military databases that misidentified the school as being part of a nearby Islamic Revolutionary Guard complex. When hundreds or even thousands of targets are being proposed each day at machine speed, there is no time for human verification, and the AI, in effect, is acting autonomously, even if technically a human rubber-stamps the AI proposal.

Secretary of Defense Pete Hegseth demonstrates strategic use of hair gel.

Clash of the Titans

Microsoft’s new Copilot Cowork is based on Claude, not ChatGPT

It appears that 2026 will be the Year of the AI Agent, and on Monday Microsoft announced its new multi-agent platform, Copilot Cowork. Based on Anthropic’s industry-leading Claude Cowork, the new Microsoft feature will allow users to assign complex workflows to AI agents, which can work through them either step by step or in parallel. In addition, Microsoft is working to embed agents into its suite of productivity tools, including Word, Excel, PowerPoint, and Outlook email. Microsoft’s choice of Claude over OpenAI for this upgrade is telling, since Microsoft has invested over $13 billion in OpenAI to date. Once fast friends, the two companies have since diverged, as each tries to keep its options open in the fast-moving environment of leading-edge AI.

Microsoft would like you to believe that Copilot Cowork is like a Reese’s Peanut Butter Cup - two great tastes in one.

Visa just gave your AI Agent a debit card

People build AI Agents to get stuff done, and sometimes that takes a bit of cash. No worries - Visa just announced pre-funded virtual debit cards that you can give your agent to buy stuff for you, without having to give it access to your credit or your bank account. The Claude desktop app can create a card for your AI agent with a fixed amount of funds set by you, and the agent can then spend those funds on your behalf. Visa’s Trusted Agent Protocol handles the housekeeping chores of verifying that you are real, the money is real, the agent is yours, and the agent has your authorization. Premier consulting firm McKinsey & Co. predicts that “agentic commerce” will grow to $3 trillion-$5 trillion annually by 2030.

Visa (and Mastercard) now allow AI Agents to wield virtual debit cards, funded by the user.

Fun News

Teens sell AI calorie counter app for $100+ million

Two high school friends, Zach Yadegari and Henry Langmack, just sold their AI-powered calorie counter app for an estimated $100+ million to MyFitnessPal, a much larger rival. The pair met in a coding camp, and decided to build an app together. The result was Cal AI, an app that took the tedium out of logging your daily calorie intake by estimating it from pictures of the meals you eat. The app went viral, and at the time of the sale had over 15 million downloads and an annual revenue of over $30 million. Team meetings at the company had to take place on Sunday nights, because the boys were maintaining 4.0 GPAs in their competitive high school. Nonetheless, Yadegari was rejected by all the elite schools he applied to (likely due to an unconventional personal essay), while Langmack is a current student at NYU. Yadegari is studying Philosophy at the University of Miami, because he found the business courses too simple for someone who was already a founder of a successful company.

Zach Yadegari, now 19, created a $100 million company before he graduated high school.

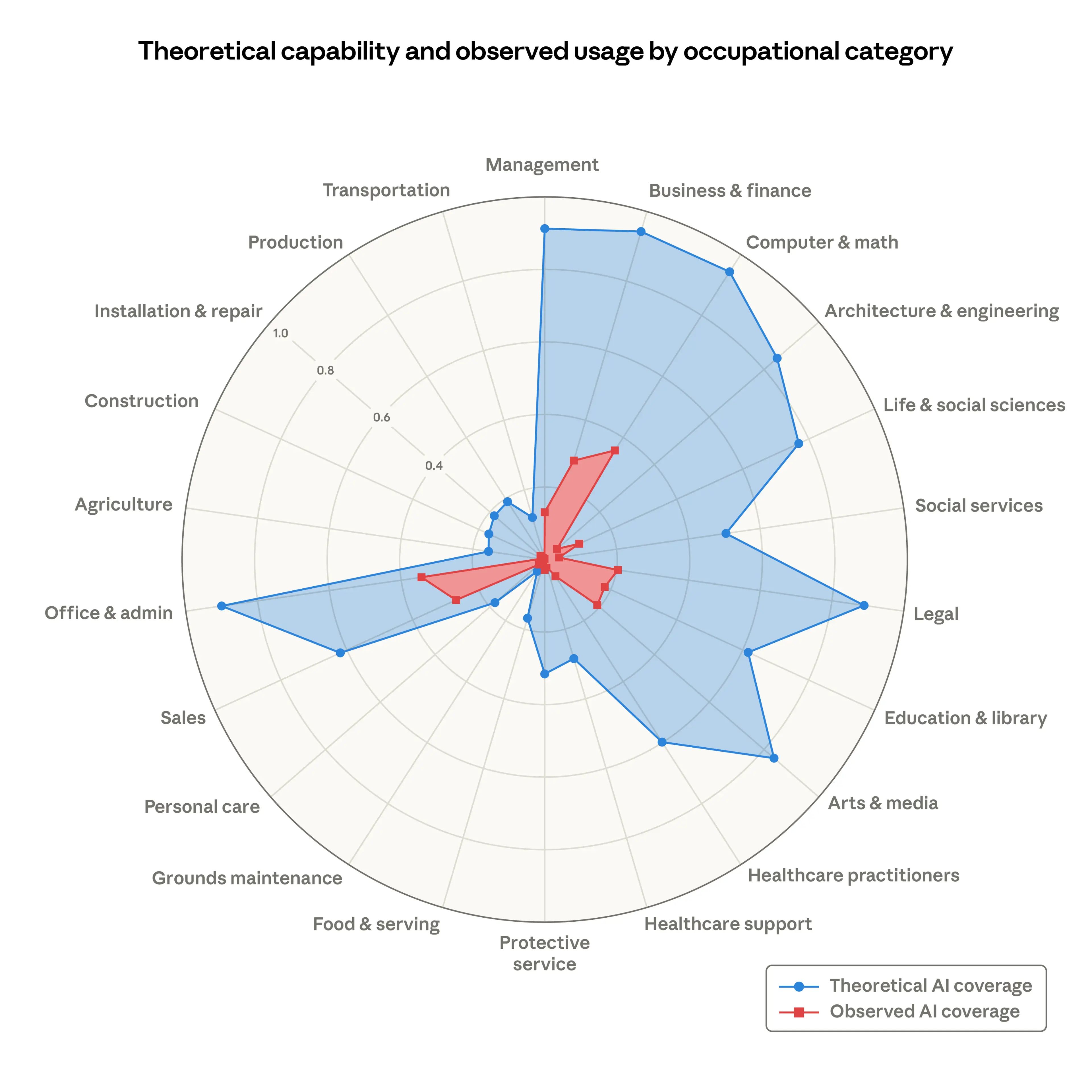

Anthropic releases report on the replaceability of jobs by AI

AI model-maker Anthropic has released a new study that assesses the fraction of each job type’s tasks that could theoretically be performed by AI, and compares it with the fraction of those tasks that is actually being performed by AI somewhere. For example, in the chart below, the category of Healthcare Practitioners is rated as 60% replaceable by AI (blue shading), meaning that AI could perform 60% of the tasks involved in the work of a healthcare practitioner (probably most of the cognitive work). But actual replacement of the work of healthcare practitioners is almost negligible (red shading), perhaps because to date only human beings are licensed to practice medicine. (Physicians shouldn’t get too comfortable - the state of Utah has granted a provisional medical license to an AI bot to refill a list of high-volume, low-risk medications. See the story below in the AI in Medicine section.)

In contrast, the job category of Computer and Math is rated as almost 100% replaceable by AI, with observed replacement in the range of 40%. This is why tech companies are no longer hiring junior software engineers, but requiring their senior software engineers to supervise an army of AI coding assistants.

Robots

Physician performs robotic surgery on patient 1500 miles away

On February 11, a surgeon in London operated on a patient in the UK outpost of Gibraltar, 1500 miles away on the coast of Spain. The operation was performed using a surgical robotic device, teleoperated over a fiber-optic connection with only a 60 millisecond delay. Robotic surgery for prostate cancer has become the standard worldwide because of the precise and delicate cuts it allows, which are crucial in this organ, where a misstep can cause urinary incontinence and/or erectile dysfunction for the remainder of the patient’s life. Generally, the surgeon is seated at the device just inches away from the patient, guiding the robotic cutting tools with joysticks. In this instance, the surgeon was seated at the familiar console in London, but his motions were transmitted via the fiber-optic link to the robotic surgical device in Gibraltar. The operation went well, and the patient reported that he was fully recovered in 4 days. The surgeon, Dr. Prokar Dasgupta, head of the London Clinic’s Robotic Centre of Excellence, sees telesurgery as a way to offer world-class health care to remote or underserved areas.

A surgeon in London performed robotic surgery for prostate cancer on a patient in Gibraltar.

Iran’s cheap drones are depleting US million-dollar interceptors

Iran’s $20,000 Shahed one-way “kamikaze” drones, launched from a truck, are depleting Patriot missile interceptors that cost $2 million each. In the first 5 days of the war against Iran, the US reportedly expended 800 Patriot missiles, more than the total used to date in the 4 years of the Ukraine war, at a cost of $2.4 billion. Lockheed Martin can only produce 50 new Patriot missiles a month, and ramping that number up could take a year or more due to complex supply chain issues. Scrambling, the US is trying to reverse engineer the Shahed and field its own low-cost drone against Iran. The question is whether these low-cost US drones will be effective against moving targets - the Shahed is programmed to fly to a fixed target, which is vastly simpler. Israel is deploying a new laser weapon, called the Iron Beam, which can neutralize an approaching drone at short range with an intense energy burst, at a cost of only a few dollars’ worth of electricity. The laser system has not yet been fully proven, and can be hampered by dust or rain.
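
For readers who like to check the math, here is a rough back-of-the-envelope sketch in Python using only the figures quoted above. The function and constant names are made up for illustration, and the exchange ratio assumes one interceptor fired per drone, which is a simplifying assumption. Note that the reported $2.4 billion total works out to about $3 million per missile, somewhat above the $2 million unit price quoted, so treat all of these as ballpark numbers.

```python
# Back-of-the-envelope arithmetic using the figures quoted in this story.
# All names here are illustrative; none come from an official source.

SHAHED_COST_USD = 20_000            # quoted cost of one Shahed drone
PATRIOT_COST_USD = 2_000_000        # quoted cost of one Patriot interceptor
PATRIOTS_EXPENDED = 800             # reportedly used in the first 5 days
REPORTED_TOTAL_USD = 2_400_000_000  # reported cost of those 800 missiles
MONTHLY_PRODUCTION = 50             # quoted Patriot output per month


def cost_exchange_ratio(interceptor_cost, drone_cost):
    """Dollars spent on defense per dollar spent on attack.

    Assumes exactly one interceptor per drone (a simplification).
    """
    return interceptor_cost / drone_cost


def months_to_replace(expended, monthly_output):
    """Months of production needed to rebuild the expended stock."""
    return expended / monthly_output


def implied_unit_cost(total_cost, count):
    """Per-missile cost implied by a reported total."""
    return total_cost / count


if __name__ == "__main__":
    ratio = cost_exchange_ratio(PATRIOT_COST_USD, SHAHED_COST_USD)
    months = months_to_replace(PATRIOTS_EXPENDED, MONTHLY_PRODUCTION)
    unit = implied_unit_cost(REPORTED_TOTAL_USD, PATRIOTS_EXPENDED)
    print(f"Exchange ratio: {ratio:.0f} to 1")
    print(f"Months to replace 5 days of use: {months:.0f}")
    print(f"Implied cost per missile: ${unit:,.0f}")
```

At the quoted production rate, five days of use would take well over a year to replace, which is the heart of the problem.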

A room full of assembled Shahed drones, Iran’s “AK-47 of the skies.”

AI in Medicine

AI Agent detects 6x more radiology follow-ups than standard methods

Parkland Health System in Dallas, Texas, performs over 500,000 radiology scans a year. At this scale, small inefficiencies can have big consequences for both cost and patient health outcomes. Parkland found that follow-up recommendations in radiology reports were being missed too often because its automated systems were too inflexible to reliably distinguish a recommendation from the other verbiage in the report. It developed an AI system trained to recognize follow-up recommendations in radiology reports and place them in the radiology workflow for action. In an evaluation of a sample of 10,000 studies, the AI agent identified 6 times more follow-up recommendations than the usual system (513 versus 83), with an accuracy of 97%. Transforming unstructured or semi-structured information into actionable data is a strength of AI, and we will no doubt see more of these types of initiatives to improve clinical workflows.
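
To put those numbers in perspective, here is a small Python sketch of the arithmetic. The names are illustrative, and the yearly projection rests on a loud assumption: that the 10,000-study sample is representative of the full 500,000 scans a year (itself a lower bound, since Parkland performs "over" that many). The evaluation did not report a yearly figure, so this is only a rough scaling.

```python
# Rough arithmetic on the evaluation figures quoted in this story.
# Names are illustrative; the annual projection is an assumption.

SAMPLE_SIZE = 10_000    # studies in the evaluation sample
AI_FOUND = 513          # follow-ups flagged by the AI agent
USUAL_FOUND = 83        # follow-ups flagged by the usual system
ANNUAL_SCANS = 500_000  # lower bound on scans per year


def detection_ratio(new_count, baseline_count):
    """How many times more follow-ups the new method found."""
    return new_count / baseline_count


def rate_per_thousand(found, sample_size):
    """Follow-ups flagged per 1,000 studies."""
    return 1000 * found / sample_size


def scaled_to_year(found, sample_size, annual_volume):
    """Naive projection, assuming the sample is representative."""
    return round(found * annual_volume / sample_size)


if __name__ == "__main__":
    print(f"Ratio: {detection_ratio(AI_FOUND, USUAL_FOUND):.1f}x")
    print(f"AI agent: {rate_per_thousand(AI_FOUND, SAMPLE_SIZE):.1f} per 1,000 studies")
    print(f"Usual system: {rate_per_thousand(USUAL_FOUND, SAMPLE_SIZE):.1f} per 1,000 studies")
    extra = (scaled_to_year(AI_FOUND, SAMPLE_SIZE, ANNUAL_SCANS)
             - scaled_to_year(USUAL_FOUND, SAMPLE_SIZE, ANNUAL_SCANS))
    print(f"Projected additional follow-ups per year: {extra:,}")
```

If the sample holds up at full scale, the difference would amount to tens of thousands of follow-up recommendations a year that the older system would not have flagged.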

Parkland Hospital in Dallas, Texas

Researchers jailbreak Doctronic’s AI prescribing system

Researchers from the highly respected cybersecurity firm Mindgard successfully hacked the public physician chatbot of AI startup Doctronic. The researchers tricked the bot into recommending dangerously high drug doses, spreading false information about vaccines, and reclassifying controlled substances as safe. This is a high-profile takedown, because Doctronic has vaulted into prominence as the first AI company to obtain state licensure to prescribe medications, under an experimental program in the state of Utah.

It needs to be emphasized that the AI bot hacked by Mindgard is not identical to the AI system licensed in Utah, which has extra guardrails. The Utah system can prescribe only from a restricted set of drugs, only as refills of prescriptions previously written by a human doctor, and never controlled substances. In addition, Utah has required that the first 250 prescriptions of each allowed drug be reviewed and validated by a human physician. Nonetheless, the underlying AI model is assumed to be the same in Utah and elsewhere, and this exploit by Mindgard is a cautionary tale for those who want to give life-and-death responsibility to today’s AI models.

AI prescribing is being tried in Utah under a new law encouraging AI innovation.

That's a wrap! More news next week.